We hasten to reassure the reader that our paper explains all the jargon involved, and the proofs of the claims are given in full: For all these differential equations, I describe conditions under which relative information decreases.

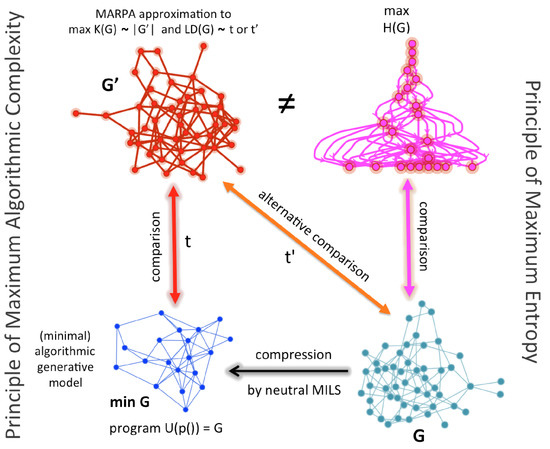

In this review, we describe various ways in which a population or probability distribution evolves continuously according to some differential equation. The relative information is also close to the expected number of extra bits required to code messages distributed according to the probability measure using a code optimized for messages distributed according to. However, relative information can be singled out by a number of characterizations, including one based on ideas from Bayesian inference. There are many other divergences besides relative information, some of which we discuss in Section 6. A more cautious description of relative information is that it is a divergence: a way of measuring the difference between probability distributions that obeysīut not necessarily the other axioms for a distance function, symmetry and the triangle inequality, which indeed fail for relative information. We put the word ‘true’ in quotes here, because the notion of a ‘true’ probability distribution, which subjective Bayesians reject, is not required to use relative information. If however we started with the hypothesis that the coin always lands heads up, we would have gained no information. For example, if we start with the hypothesis that a coin is fair and then are told that it landed heads up, the relative information is, so we have gained 1 bit of information. Intuitively, is the amount of information gained when we start with a hypothesis given by some probability distribution and then learn the ‘true’ probability distribution. The reason is that the Shannon entropyĬontains a minus sign that is missing from the definition of relative information. We use the word ‘information’ instead of ‘entropy’ because one expects entropy to increase with time, and the theorems we present will say that decreases with time under various conditions. 
Given two probability distributions and on a finite set, their relative information, or more precisely the information of relative to, is Most of these results involve a quantity that is variously known as ‘relative information’, ‘relative entropy’, ‘information gain’ or the ‘Kullback–Leibler divergence’. In this review, we explain some mathematical results that make this idea precise. Closely related quantities such as free energy tend to decrease. A chemical reaction approaching equilibrium.Īn interesting common feature of these processes is that as they occur, quantities mathematically akin to entropy tend to increase.Random processes such as mutation, genetic drift, the diffusion of organisms in an environment or the diffusion of molecules in a liquid.A population approaching an evolutionarily stable state.This can occur on a wide range of scales, from large ecosystems to within a single cell or organelle. Nonetheless, it is important in biology that systems can sometimes be treated as approximately closed, and sometimes approach equilibrium before being disrupted in one way or another. Biological systems are also open systems, in the sense that both matter and energy flow in and out of them. Life relies on nonequilibrium thermodynamics, since in thermal equilibrium there are no flows of free energy. In the paper we advocate using the term ‘relative information’ instead of ‘relative entropy’-yet the latter is much more widely used, so I feel we need it in the title to let people know what the paper is about!
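To make the coin example concrete, here is a minimal Python sketch of relative information on a finite set, assuming the standard Kullback–Leibler formula (the sum over outcomes of p_i ln(p_i / q_i), measured in nats). The function name `relative_information` and the list-based representation of distributions are illustrative choices, not something taken from the paper.

```python
import math


def relative_information(p, q):
    """Information of p relative to q, in nats: sum of p_i * ln(p_i / q_i).

    p and q are sequences of probabilities over the same finite set.
    (Hypothetical helper name; uses the usual convention 0 * ln 0 = 0.)
    """
    if len(p) != len(q):
        raise ValueError("p and q must be distributions on the same finite set")
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i == 0:
            continue  # outcomes with p_i = 0 contribute nothing
        if q_i == 0:
            return math.inf  # p puts weight where the hypothesis q puts none
        total += p_i * math.log(p_i / q_i)
    return total


fair = [0.5, 0.5]    # hypothesis: the coin is fair
heads = [1.0, 0.0]   # what we learn: it landed heads up

# Starting from the fair-coin hypothesis, we gain ln 2 nats, i.e. 1 bit.
gain_bits = relative_information(heads, fair) / math.log(2)

# Starting from the hypothesis "always heads", we gain nothing.
no_gain = relative_information(heads, heads)

# Symmetry fails: swapping the two arguments changes the value.
p = [0.9, 0.1]
forward = relative_information(p, fair)
backward = relative_information(fair, p)

print(gain_bits, no_gain, forward, backward)
```

Dividing by `math.log(2)` converts nats to bits, which matches the "1 bit" in the coin example. The last two values differ, which illustrates why relative information is a divergence rather than a distance.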

We’d love any comments or questions you might have.
